Space Efficient Encrypted Backups

I've been seeing more interest lately in performing off-site backups of personal information. I've been doing this for quite a while, and although I've described it to a few people, I can't find anything I've actually written up where people can read about it without my presence, so I thought I'd throw something together real quickly.

The Goal

The goal is very simple. I want all of my important data backed up and out of my machine room as quickly as I can get it, as cheaply as possible. I also want to keep an unreasonably large number of full backups online.

The Solution

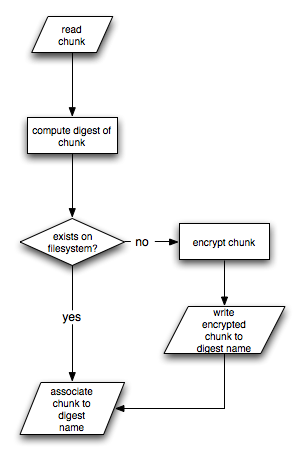

The excessively large flow chart to the right shows the bulk of the algorithm that handles the space efficient storage and encryption.

Every stream is broken into chunks of a specific size (configurable per stream). Each chunk is digested (currently with MD5), and we look in the digest storage to see whether that digest is already stored. If it is not, the chunk must be encrypted and stored in the digest storage. Once a digest is stored, it may be referenced by many backups, both over time and across different tables that may contain identical information.
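The chunk-and-digest loop described above can be sketched roughly as follows. This is a minimal illustration, not the actual backup code: the function name `store_stream`, the one-file-per-digest layout, and the `encrypt` callback are all assumptions made for the example.

```python
import hashlib
import io
from pathlib import Path
from typing import BinaryIO, Callable, List


def store_stream(stream: BinaryIO, store_dir: Path, chunk_size: int,
                 encrypt: Callable[[bytes], bytes]) -> List[str]:
    """Split a stream into fixed-size chunks and store each unseen chunk once.

    Returns the ordered list of digests that describes this stream.
    The layout (one file per digest under store_dir) is hypothetical.
    """
    store_dir.mkdir(parents=True, exist_ok=True)
    manifest: List[str] = []
    while True:
        chunk = stream.read(chunk_size)
        if not chunk:
            break
        # The digest is taken over the plaintext chunk.
        digest = hashlib.md5(chunk).hexdigest()
        target = store_dir / digest
        if not target.exists():
            # Only chunks that have never been seen are encrypted and stored.
            target.write_bytes(encrypt(chunk))
        manifest.append(digest)
    return manifest
```

A backup then amounts to the list of digests returned for each stream; a chunk that shows up again, in a later backup or in another table, costs nothing beyond one more reference.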

Encryption is done with GPG, to at least two public keys whose corresponding private keys are not available on the server performing the backups.
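One way to encrypt a chunk to several public keys is to pipe it through the `gpg` command line. The sketch below assumes `gpg` is on the PATH and that the recipients' public keys are already imported and trusted on the backup server; the function names and the key IDs in the usage note are placeholders, not the real setup.

```python
import subprocess
from typing import List, Sequence


def gpg_encrypt_command(recipients: Sequence[str]) -> List[str]:
    """Build a gpg command line that encrypts stdin to every recipient."""
    if len(recipients) < 2:
        raise ValueError("encrypt to at least two public keys")
    cmd = ["gpg", "--batch", "--encrypt"]
    for key_id in recipients:
        cmd += ["--recipient", key_id]
    return cmd


def encrypt_chunk(chunk: bytes, recipients: Sequence[str]) -> bytes:
    """Pipe one chunk through gpg and return the ciphertext.

    Only public keys are needed here, so the server never holds
    anything that could decrypt what it writes.
    """
    result = subprocess.run(gpg_encrypt_command(recipients), input=chunk,
                            stdout=subprocess.PIPE, check=True)
    return result.stdout
```

Calling `encrypt_chunk(data, ["KEYID1", "KEYID2"])` with two hypothetical key IDs produces output that either private key can decrypt, so losing one key does not lose the backups.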

The Result

The result meets the goal very nicely. There are daily backups that each represent the entire state of all of my databases as of that point in time. They're transferred out of the house within minutes of completion, and they are unreadable to anyone who hasn't either broken GPG or mugged me and stolen my GPG key and the AES password for the disk image in which it's stored.

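Today, I've got 78 days' worth of backups online -- each one a full dump. The smallest is 5.2GB, and the largest is 5.9GB. The data the two don't share comes to... 1.1GB (when du is given both directories, it counts files shared between them only once):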

    purple:/data/bak/purple/db 517> du -sh 20060609
    5.2G 20060609
    purple:/data/bak/purple/db 518> du -sh 20060826
    5.9G 20060826
    purple:/data/bak/purple/db 519> du -sh 20060609 !$
    du -sh 20060609 20060826
    5.2G 20060609
    1.1G 20060826

That's an unusual gap, though. The difference between today's 5.9GB and yesterday's 5.9GB is 2.3MB. That's how much gets transferred offsite each night. Tomorrow's will probably be a bit more since a lot of data is being added today, but that's what we want.
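The nightly transfer size follows directly from the digest scheme: only chunks whose digests are not already offsite need to move. A small sketch of that arithmetic, assuming a backup is represented as a collection of digests and that stored chunk sizes are available in a dictionary (both are assumptions for illustration):

```python
from typing import Dict, Iterable


def new_chunk_bytes(already_offsite: Iterable[str], tonight: Iterable[str],
                    sizes: Dict[str, int]) -> int:
    """Total size of the chunks tonight's backup adds to the offsite store."""
    fresh = set(tonight) - set(already_offsite)
    return sum(sizes[digest] for digest in fresh)
```

Two full dumps that share nearly all of their chunks differ by only a handful of digests, so the amount to transfer stays small even though each backup is complete.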